A watt (W) is the unit used to measure electrical power. It tells you how quickly a device uses or produces energy at any given moment.

For most homeowners, watts only come up when looking at appliance labels or electricity bills. That’s where confusion starts. It’s common to mix up watts with volts or amps, or to underestimate how small differences in power consumption can add up over time.

This becomes even more relevant when you start comparing appliances, planning energy use, or considering solar. A device that runs at a higher wattage will generally consume more electricity, which directly impacts your monthly bill.

This guide breaks it down in a practical way. You’ll see what watts actually measure, how the formula works, and how to translate that number into real costs at home. By the end, you should find it easier to understand what you’re paying for and where you can optimize energy use.

What is a Watt?

A watt (W) measures electrical power. In simple terms, it shows how fast electricity is being used or generated at a specific moment. Every appliance in your home operates within a specific wattage range, which helps define both its performance and energy consumption.

What does Watt measure?

A watt measures the rate of energy use. It tells you how much electrical energy a device consumes per second while it’s running.

For example, a 100 W light bulb uses 100 joules of energy every second. The higher the wattage, the more energy the device needs to operate.
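As a quick worked sketch of that relationship, energy in joules is power in watts multiplied by running time in seconds. The function name `energy_joules` is illustrative only and does not come from any library.

```python
def energy_joules(power_w, seconds):
    """Energy (joules) used by a device drawing power_w watts for `seconds` seconds."""
    return power_w * seconds


# A 100 W bulb running for one second, then for one minute.
print(energy_joules(100, 1))
print(energy_joules(100, 60))
```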

What does 30 Watts mean?

If a device is rated at 30 W, it draws 30 joules of energy every second while in use.

In practical terms, that’s relatively low consumption. Devices like LED bulbs, small fans, or routers often fall into this range. Over time, though, even 30 W adds up, depending on how many hours the device stays on.

What is the difference between Watts and Volts?

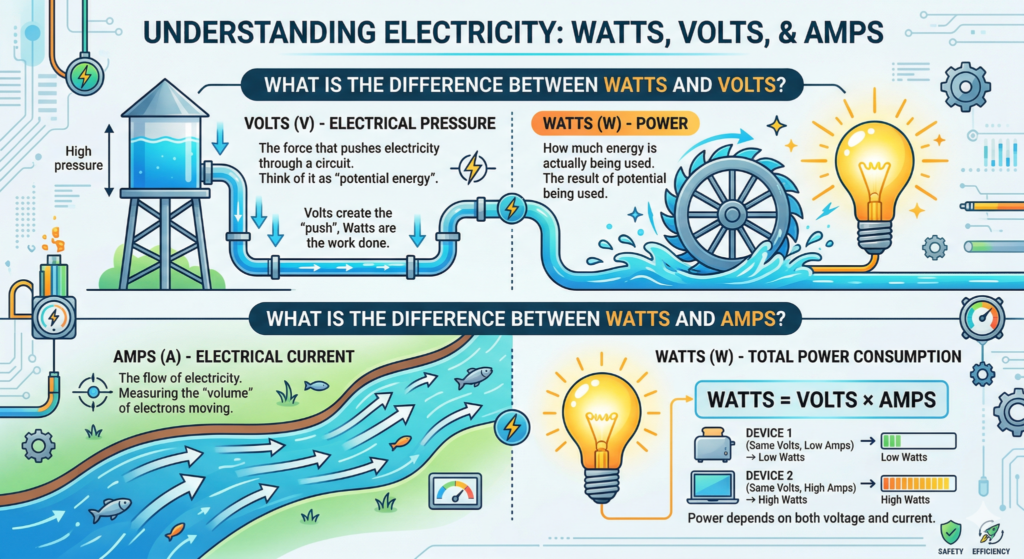

Watts and volts measure different aspects of electricity.

- Volts (V) measure electrical pressure, the force that pushes electricity through a circuit

- Watts (W) measure power, the rate at which energy is actually being used

You can think of volts as the potential, and watts as the result of that potential being used.

What is the difference between Watts and amps?

Watts and amps are also closely related, but they represent different things.

- Amps (A) measure electrical current, the flow of electricity

- Watts (W) measure total power consumption

They connect through a simple relationship: power depends on both voltage and current. That’s why two devices with the same voltage can have very different wattages depending on how much current they draw.

Watts formula

Watts are calculated by combining voltage and current. This relationship shows how electrical systems actually deliver power to a device.

P = V × I

Here, power (P) is measured in watts, voltage (V) in volts, and current (I) in amps.

If you know any two of these values, you can calculate the third.
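A minimal sketch of that "any two give you the third" idea, assuming the simple relationship stated above holds exactly. The function `solve_power_law` and its parameter names are hypothetical, chosen for this example.

```python
def solve_power_law(power_w=None, volts=None, amps=None):
    """Given exactly two of power (W), voltage (V), current (A), return the third.

    Uses the relationship power = voltage * current.
    """
    provided = sum(value is not None for value in (power_w, volts, amps))
    if provided != 2:
        raise ValueError("provide exactly two of power_w, volts, amps")
    if power_w is None:
        return volts * amps      # watts
    if volts is None:
        return power_w / amps    # volts
    return power_w / volts       # amps


print(solve_power_law(volts=220, amps=5))        # power in watts
print(solve_power_law(power_w=1100, volts=220))  # current in amps
```

Passing keyword arguments keeps it obvious which quantity is being solved for.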

How many Watts is 220 volts?

Voltage alone doesn’t define wattage. You also need to know the current (amps).

Using the formula:

- 220 V × 1 A = 220 W

- 220 V × 5 A = 1,100 W

- 220 V × 10 A = 2,200 W

So, 220 volts can represent very different power levels depending on how much current the device draws. That’s why appliances connected to the same voltage can still have very different energy consumption.

What is 1 kWh in Watts?

A kilowatt-hour (kWh) measures energy over time, not instantaneous power.

- 1 kW = 1,000 watts

- 1 kWh = 1,000 watts used for 1 hour

So if you run a 1,000 W appliance for one hour, it consumes 1 kWh of energy.

If the same device runs for 30 minutes, it uses 0.5 kWh. If it runs for 2 hours, it uses 2 kWh.

This is the unit your electricity bill uses, which is why understanding the relationship between watts and time helps you estimate real costs at home.
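The conversion from watts and hours to kilowatt-hours can be sketched in a few lines. The helper name `energy_kwh` is an assumption made for this example.

```python
def energy_kwh(power_w, hours):
    """Energy in kilowatt-hours for a device drawing power_w watts for `hours` hours."""
    return power_w * hours / 1000


# The 1,000 W appliance from the text, run for 30 minutes, 1 hour, and 2 hours.
for hours in (0.5, 1, 2):
    print(f"1,000 W for {hours} h = {energy_kwh(1000, hours)} kWh")
```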

Who is the Watt named after?

The watt is named after James Watt, a Scottish engineer known for improving the steam engine in the 18th century. His work made it easier to measure and compare power in practical terms, especially in industrial settings.

Over time, the unit bearing his name became the standard unit of power in the International System of Units (SI). Today, watts are used across everything from household appliances to large-scale energy systems, including solar installations.

What are Watts used for?

Watts show up anywhere electricity is involved. They help you understand how much power a device needs to operate or how much energy a system can deliver.

At home, watts are used to:

- Compare appliances, like choosing between a 60 W and a 10 W light bulb

- Estimate energy consumption over time

- Understand electricity bills, since higher wattage usually means higher energy use

In solar systems, watts are used to describe:

- Panel capacity, for example, a 400 W solar panel

- System size, such as a 5 kW residential setup

- Expected energy production under certain conditions

This makes watts a key reference point when deciding how to manage energy use or evaluate whether solar could meet your household’s demand.

How do Watts affect electricity bills?

Your electricity bill is based on how much energy you use over time, measured in kilowatt-hours (kWh). Watts play a direct role in that calculation because they define how much power each device consumes while it’s running.

The higher the wattage and the longer the usage, the more you pay. That’s why understanding watts helps you identify where your energy costs are coming from and where you can reduce them.
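To make the link between wattage, hours, and cost concrete, here is a rough sketch. The price of 0.15 per kWh, the 30-day month, and the 1,500 W example device are all placeholder assumptions; substitute the rate printed on your own bill.

```python
def monthly_cost(power_w, hours_per_day, price_per_kwh, days=30):
    """Estimated cost of running one device for a month.

    power_w        watts drawn while running
    hours_per_day  average daily hours of use
    price_per_kwh  tariff in your currency (assumed flat, no tiers or fees)
    """
    kwh = power_w * hours_per_day * days / 1000
    return kwh * price_per_kwh


# Hypothetical example: a 1,500 W device used 2 hours a day at 0.15 per kWh.
cost = monthly_cost(1500, 2, 0.15)
print(f"Estimated monthly cost: {cost:.2f}")
```

Real bills often include fixed charges, taxes, or tiered rates, so treat the result as an estimate of the usage portion only.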

How many Watts does a house use?

There isn’t a single number that fits every home. Total watt usage depends on:

- The size of the house

- Number of appliances

- Usage habits throughout the day

A small home might run on 3,000 to 5,000 watts at a given moment, while larger homes with air conditioning, electric showers, or multiple appliances running at once can exceed 10,000 watts.

What matters most is not just peak usage, but how long these loads stay active during the day.
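The difference between peak usage and daily energy can be sketched as follows. The appliance list, wattages, and hours of use are hypothetical values picked for illustration, not measurements.

```python
# (name, watts while running, assumed hours of use per day)
loads = [
    ("LED lighting", 100, 5),
    ("Air conditioner", 2000, 4),
    ("Microwave", 1000, 0.25),
    ("TV", 100, 4),
]


def peak_load_w(loads):
    """Instantaneous demand in watts if every listed device runs at once."""
    return sum(watts for _, watts, _ in loads)


def daily_energy_kwh(loads):
    """Total energy per day in kWh, which is what the bill is based on."""
    return sum(watts * hours for _, watts, hours in loads) / 1000


print(f"Peak load: {peak_load_w(loads)} W")
print(f"Daily energy: {daily_energy_kwh(loads):.2f} kWh")
```

In this made-up list the microwave is the second largest load by wattage, yet it contributes the least to daily energy because it only runs for a few minutes.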

How can I calculate the wattage of a device?

You can find wattage in three main ways:

- Check the label on the device (usually listed in watts or amps and volts)

- Use the formula: Watts = Volts × Amps

- Look up the model specifications online

If a device only shows amps and volts, multiplying both gives you the wattage. This helps estimate how much energy it will consume over time.

How many Watts does a typical household appliance use?

Here’s a general idea of common household consumption:

- LED light bulb: 5 to 15 W

- Refrigerator: 100 to 400 W

- Microwave: 800 to 1,500 W

- TV: 50 to 200 W

- Air conditioner: 1,000 to 3,500 W

These numbers vary by model and efficiency, but they show how quickly high-wattage appliances can impact your electricity bill, especially when used for long periods.

How many Watts do solar panels produce?

Solar panels are rated in watts based on how much power they can produce under ideal conditions. This is called peak power or “rated wattage.”

Most residential solar panels today fall within a range of 350 W to 550 W per panel.

A higher wattage panel can generate more electricity in the same amount of sunlight, which helps reduce the number of panels needed for your system.

In real-world conditions, output varies throughout the day. Factors that affect production include:

- Sunlight intensity and hours of exposure

- Roof orientation and tilt

- Shading from trees or nearby buildings

- Temperature and system efficiency

For example, a 400 W panel might produce around 1.6 to 2.0 kWh per day in a location with good sunlight. Over a month, that adds up to roughly 48 to 60 kWh per panel.
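One common way to reproduce figures like these is to multiply the panel rating by the number of "peak sun hours" per day. That term is an assumption introduced here, not something defined in this guide; the 4 and 5 hour values below are simply what the 1.6 to 2.0 kWh range implies for a 400 W panel, with system losses ignored.

```python
def panel_energy_kwh(panel_w, peak_sun_hours, days=1):
    """Rough energy estimate for one panel, ignoring losses.

    peak_sun_hours: assumed equivalent hours per day at full rated output.
    """
    return panel_w * peak_sun_hours * days / 1000


for sun_hours in (4, 5):
    daily = panel_energy_kwh(400, sun_hours)
    monthly = panel_energy_kwh(400, sun_hours, days=30)
    print(f"{sun_hours} peak sun hours: {daily} kWh/day, {monthly} kWh/month")
```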

When planning a solar system, installers use your household energy consumption (in kWh) to estimate how many panels you need. The goal is to match, or partially offset, your monthly electricity usage based on available roof space and local conditions.
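A first-pass version of that estimate might look like the sketch below. The 600 kWh monthly usage is a hypothetical household figure, and `panels_needed` is an illustrative helper; an installer's sizing would also account for roof space, shading, and system losses.

```python
import math


def panels_needed(monthly_usage_kwh, kwh_per_panel_per_month, offset=1.0):
    """Number of panels to cover a share (`offset`, 0 to 1) of monthly usage.

    Rounds up, since you cannot install a fraction of a panel.
    """
    return math.ceil(monthly_usage_kwh * offset / kwh_per_panel_per_month)


# Hypothetical home using 600 kWh per month, with each panel producing
# 48 to 60 kWh per month.
print(panels_needed(600, 48))              # lower-production case
print(panels_needed(600, 60))              # higher-production case
print(panels_needed(600, 60, offset=0.5))  # offsetting half the usage
```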

FAQs

How many Watts does a light bulb use?

It depends on the type of bulb. Traditional incandescent bulbs usually range from 40 W to 100 W. LED bulbs are much more efficient and typically use between 5 W and 15 W to deliver the same level of brightness.

Switching to LEDs is one of the simplest ways to reduce energy consumption without changing how you use lighting.

How many Watts does a refrigerator use?

Most refrigerators operate between 100 W and 400 W while running. However, they don’t stay on at full power all the time. The compressor cycles on and off, so actual energy use depends on efficiency, size, and how often the door is opened.

Newer models tend to consume less power due to improved insulation and compressor technology.
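Because the compressor cycles, a rough daily estimate multiplies the running wattage by the fraction of time the compressor is actually on. The 150 W rating and the 40% duty cycle below are assumed values for illustration; real duty cycles vary by model, room temperature, and usage.

```python
def daily_kwh_with_cycling(running_w, duty_cycle, hours=24):
    """Daily energy in kWh for a device that only runs part of the time.

    duty_cycle: fraction of the time the device is drawing running_w (0 to 1).
    """
    if not 0 <= duty_cycle <= 1:
        raise ValueError("duty_cycle must be between 0 and 1")
    return running_w * hours * duty_cycle / 1000


# Hypothetical 150 W refrigerator whose compressor runs 40% of the time.
print(f"{daily_kwh_with_cycling(150, 0.4):.2f} kWh per day")
```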

How many Watts does a microwave use?

Microwaves typically range from 800 W to 1,500 W. Higher wattage models cook food faster, but they also draw more power while in use.

Since microwaves are used for short periods, their overall impact on your electricity bill is usually lower compared to appliances that run continuously.

How many Watts does a TV use?

TV power consumption varies by size and technology. Most modern TVs fall within 50 W to 200 W.

LED TVs tend to be more efficient, while larger screens and higher brightness settings increase consumption. Streaming for several hours a day can add up, especially on bigger models.

How many Watts does a computer use?

It depends on the type of computer and how it’s used:

- Laptops: 30 W to 100 W

- Desktop computers: 150 W to 500 W

Gaming PCs or high-performance setups can go even higher under heavy load. On the other hand, basic tasks like browsing or writing use much less power.

What are Peak Watts vs Running Watts?

These terms describe how much power a device uses at different moments:

- Peak watts refer to the maximum power a device needs, usually when starting up

- Running watts refer to the normal power consumption during operation

Appliances with motors, like refrigerators or air conditioners, often require a higher surge of power at startup. This difference matters when sizing generators or solar systems, since they need to handle both the startup demand and continuous usage.
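One simple sizing heuristic, sketched below, adds up the running watts of everything that will be on and then adds the single largest startup surge on top. This assumes only one motor starts at any given moment, which is a simplification, and the appliance figures are hypothetical.

```python
def required_capacity(loads):
    """Return (running_total_w, surge_total_w) for a list of loads.

    loads: list of (name, running_watts, peak_watts).
    surge_total_w assumes only the device with the largest extra startup
    demand is starting while everything else is already running.
    """
    running_total = sum(running for _, running, _ in loads)
    largest_extra = max((peak - running for _, running, peak in loads), default=0)
    return running_total, running_total + largest_extra


# Hypothetical loads: (name, running watts, peak watts at startup)
loads = [
    ("Refrigerator", 200, 600),
    ("Air conditioner", 1500, 3000),
    ("Lighting", 100, 100),
]

running_w, surge_w = required_capacity(loads)
print(f"Continuous demand: {running_w} W")
print(f"Demand during largest startup: {surge_w} W")
```

A generator or inverter would need a continuous rating above the first number and a surge rating above the second.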

Final Words

Watts give you a clear view of how electricity is used in your home. From understanding what a watt measures to applying the formula and comparing appliances, it all comes down to one thing: how much power you use and for how long.

Once you connect watts to kWh, it becomes easier to see where your electricity bill is coming from. High-wattage devices, long usage hours, and inefficient appliances all play a role. The same logic applies when evaluating solar. Panel wattage, system size, and daily production determine how much of your consumption you can offset.

If you’re paying closer attention to your energy usage now, the next step is to understand how that translates into a solar setup for your home. A good starting point is to see how many panels you might actually need based on your consumption.